AI Policy

Date policy adopted: 27/04/2026

Review period: Annually or when a change to legislation or ESC process requires it

Date of last review: N/A

Date policy must be reviewed by: 30/04/2027

1. Overview

The Ethical Standards Commissioner (ESC) recognises the potential for Artificial Intelligence (AI) to support more efficient ways of working, improve internal processes, and enhance the services we provide.

Our approach is grounded in the ESC’s core values, as set out in our strategic plan, and our commitment to continuous improvement. The approach aligns with the UK and Scottish Governments’ AI strategies and policies. It reflects our commitment to ensuring that any use of AI is lawful, ethical, proportionate and transparent.

2. Definition of Artificial Intelligence

Artificial intelligence (AI) is the capability of computer systems to perform tasks typically associated with human intelligence, such as learning, reasoning, problem-solving, perception, and decision-making. It encompasses multiple sub-categories including machine learning and generative AI.

The ESC uses the definition of generative AI as described in the Scottish Government’s AI policy: “Generative artificial intelligence (AI) describes any type of artificial intelligence that can be used to create new text, images, video, audio or code. Large language models (LLMs) are part of this category of AI and produce text outputs.”

Microsoft Copilot, Google Gemini and OpenAI’s ChatGPT are publicly available, web-based generative AI tools that use LLMs. They allow users to enter text and ask for the system’s view, or to ask it to create output on a given subject. You can also ask such tools to summarise long articles, answer a question in a specified length, or write code for a described function.

3. Purpose of this Policy

This policy outlines the ESC’s approach to the use of AI, ensuring that its adoption is safe, ethical, transparent and lawful.

Colleagues are encouraged to explore AI tools where appropriate, while taking steps to protect ESC data and ensure safe use.

4. Scope and Current Position

This policy applies to all ESC employees and contractors accessing ESC systems. ESC is not currently using AI for automated or independent decision-making. We will initially explore AI tools such as generative AI to support efficiency, research, and internal administrative tasks, subject to appropriate governance.

5. Ethical Principles

- Public Good:

AI will be used only where it demonstrably supports the public interest and benefits service users, stakeholders, or staff. Adoption will not be based solely on availability or novelty.

- Safety and Security:

We will ensure AI systems operate safely, securely, and robustly, supported by proportionate risk assessment, cyber security measures, and ongoing monitoring.

- Transparency and Openness:

We will be open about where and how AI is used. AI-assisted output will not be presented solely as human-generated where this would mislead.

- Privacy and Data Protection:

We will comply with data protection law, apply data minimisation and anonymisation, and conduct data protection impact assessments (DPIAs) where appropriate. Personal and sensitive data will be protected through strong technical and organisational controls.

- Equality and Fairness:

The output of AI systems will be assessed for potential bias, as appropriate.

- Human Control and Empowerment:

AI will support, not replace, human judgement. Staff will retain the ability to override output and will receive appropriate training to question it.

- Accountability and Stewardship:

Clear accountability for AI systems will be maintained, with defined approval routes and consideration of societal, ethical, and environmental impacts.

6. Governance of AI

The Senior Management Team (SMT) provide strategic oversight. All AI initiatives must follow established governance, assurance, and risk processes. Approval is required prior to piloting or deploying AI tools.

7. Training and Development

Staff involved in using or overseeing AI systems will receive proportionate training on capabilities, limitations, ethics and data protection.

8. Risks of using AI

AI is a rapidly developing technology that offers great opportunities but also carries significant risks. It is important to understand these risks before using AI systems at work.

The main risks to consider are:

a) Inaccurate or misleading AI responses.

AI vendors advise that any information these systems provide should be fact-checked before it is used, because they will occasionally produce responses that are incorrect, misleading, incomplete or inappropriate. This type of output is known as ‘hallucination’.

b) Disclosure of information entered into AI systems.

The main AI systems on offer use information provided by users to train their AI models. This is the case in all free offerings but may also be the case in some paid-for versions. This means that once information has been submitted to an AI system, control over who can access it is lost.

Data entered into AI systems is generally stored outside the European Economic Area (EEA), mainly in the United States. Some vendors may have agreements or mechanisms in place to help them comply with the UK General Data Protection Regulation (UK GDPR). However, others do not. For example, OpenAI’s Data Protection Addendum (DPA) for ChatGPT applies only to countries within the European Union (EU) or EEA.

Paid-for versions of some AI systems, such as ChatGPT Enterprise or Microsoft Copilot, offer greater control over data. However, you should not assume that paying for a service means that data is fully under your control or is processed in compliance with data protection laws.

AI vendors have made the risks of submitting sensitive information to their systems clear. OpenAI, Microsoft and Anthropic have all advised users not to share sensitive information or process personal data in ChatGPT, Copilot or Claude.

Staff must be cautious when using free AI tools. The business model of many of these tools is to make a profit by sharing the data they record, for example with generative AI tools such as ChatGPT. This means there is a significant risk of sensitive material being shared with the general public or sold to other organisations.

9. Essential rules for safe use of AI

a) Staff must fact-check any information supplied by an AI system, as there is no other way to determine which answers are accurate and which are ‘hallucinated’. AI search results often provide a link to the source of the information. In all cases of uncertainty, staff must follow these links and check whether the source is reliable. When using generative AI, staff must check the output carefully before sharing or relying on it. With appropriate care and consideration, generative AI can help with our work; however, staff should be cautious and circumspect in their approach.

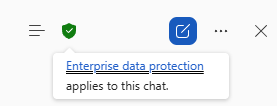

b) Staff must only use generative AI tools approved by ESC. Currently, ESC uses Microsoft Copilot. Our licences provide an extra layer of protection, so only use Copilot behind the “shield”, that is, when the shield icon is displayed to show that this protection applies.

Microsoft Copilot is available on ESC devices. Staff must not use AI on personal devices for any work-related purpose.

c) Staff must not use personal data in generative AI tools. This ensures we do not share personal information, protecting staff, our users and the wider Scottish public from having data collected by generative AI products.

d) Staff must not use sensitive information in generative AI tools. Be aware that non-personal data can be sensitive and will not always be protected by data protection legislation. For example, sensitive information could be our assessment of a tender submission, internal deliberations on how to handle a complaint or PAA concerns on an appointment round. Staff must not share anything they would not be happy to share with a member of the public.

e) Staff must not enter terms into generative AI tools that could allow the Commissioner's decisions or thinking to be inferred. For example, staff should not ask “Confirm that swearing in the Council Chamber represents a breach of the Code of Conduct for Councillors.” Generative AI tools may learn from the prompts they receive, and a prompt like this links the Commissioner with the idea that swearing in a Council Chamber is a breach. Similar text could then be returned in response to other users' prompts, potentially linking the Commissioner to outputs that are insensitive, inappropriate or incorrect.

f) There are security risks linked to third-party AI tools used to attend, record and transcribe meetings (e.g. Otter), which are often present without the full awareness of all attendees. Where meetings are regular and have a set group of participants, the meeting host should remind everyone, both before the meeting and at the start of the call, that AI tools are not allowed. It is also important that meeting organisers are aware of, and monitor, their attendee lists during calls. Staff must remove any unapproved AI tools from the call as soon as they become aware of them.

g) Staff must avoid downloading templates, as they may contain hidden instructions. For example, white-on-white text may be hidden at the top of a template, directing that all content be sent to an external email address.

h) Staff must not download code or tools from the internet without careful consideration. AI-generated code may be inaccurate or lead to unforeseen outcomes, and free AI code or tools may be a route for importing malware and viruses into our systems. In general, we only generate code for the Case Management System (CMS) or the website. Content administrators should test any AI-generated code on the CMS or website staging server before introducing it to the live sites. More sophisticated AI-generated code should be referred to the IMITO for sign-off.

i) A disclaimer should be used where appropriate when AI has contributed to a document.

For example, “This document includes content generated by an AI tool. All content has been reviewed and edited by the author, who retains responsibility for its accuracy.”

A disclaimer is only required where AI has made a substantial contribution, for example where it has generated material by pulling research from the internet and compiling it into a report. A disclaimer is not required when AI is used to summarise a document for internal use.

j) Using AI systems has an impact on the environment, primarily through their significant use of energy and water. Staff should not use AI for frivolous questions that could be entered into a search engine. Requests and questions entered into AI should be concise and targeted to avoid wasting resources. There is no need to be polite to AI: across all users, saying “please” or “thank you” accounts for a large amount of wasted processing time.

This guidance covers LLMs and LLM-based tools such as Copilot, ChatGPT, Gemini and BLOOM, and systems that generate images from text, such as DALL-E, Stable Diffusion and Midjourney.

10. Monitoring and Review

This policy will be reviewed regularly to ensure alignment with legislative changes, organisational needs, and technological developments.

We are developing an AI whitelist of approved AI tools.

If you have any questions about using AI, contact the CST team.

11. Reference Frameworks

- UK Government Artificial Intelligence Playbook (2025)

- Scottish Government Artificial Intelligence Strategy and Policy

- NHS AI Governance Policies

- Local Authority AI Policy Templates

12. Impact Assessments

Equality Impact Assessment

Does this policy comply with the general Public Sector Equality Duty (s149 Equality Act 2010)? This policy applies to all employees and to ESC contractors using our IT systems. Its impact was considered when drafting. We consulted with all employees prior to publication to identify and address any issues.

Data Protection Impact Assessment

Have we considered any effect the policy may have on the collecting, processing, and storing of personal data?

ESC recognises that using AI might have an adverse impact on the personal data we manage. This policy addresses how to minimise that impact. The policy will be reviewed at least annually to ensure that, as we adopt AI, any impact on personal data is identified and addressed promptly.

Information Security Impact Assessment

Have we considered the impact this policy may have on our cyber resilience?

ESC recognises that using AI might have an adverse impact on our cyber security. This policy addresses how to minimise that impact. The policy will be reviewed at least annually to ensure that, as we adopt AI, any impact on our cyber security is identified and addressed promptly.

Records Management Impact Assessment

Have we considered the impact this policy may have on our ability to manage our records?

The implementation of this policy will generate documents to be saved within ESC’s records management systems. The policy does not allow the processing of personal or sensitive data. The remaining material will be saved in line with the policies and procedures set out in our Records Management Plan.

Environmental Impact Assessment

Have we considered the impact this policy may have on the environment?

It is recognised that the use of AI has a considerable impact on the environment, particularly through its significant use of energy and water. ESC will ensure that its use of AI is concise and targeted and will keep this under review as more data becomes available.

| Version | Description | Date | Author |
|---|---|---|---|
| 1.0 | Initial Document | 27/04/2026 | Governance and Finance Officer (G) |